Kosmos-1 is a new Multimodal Large Language Model (MLLM).

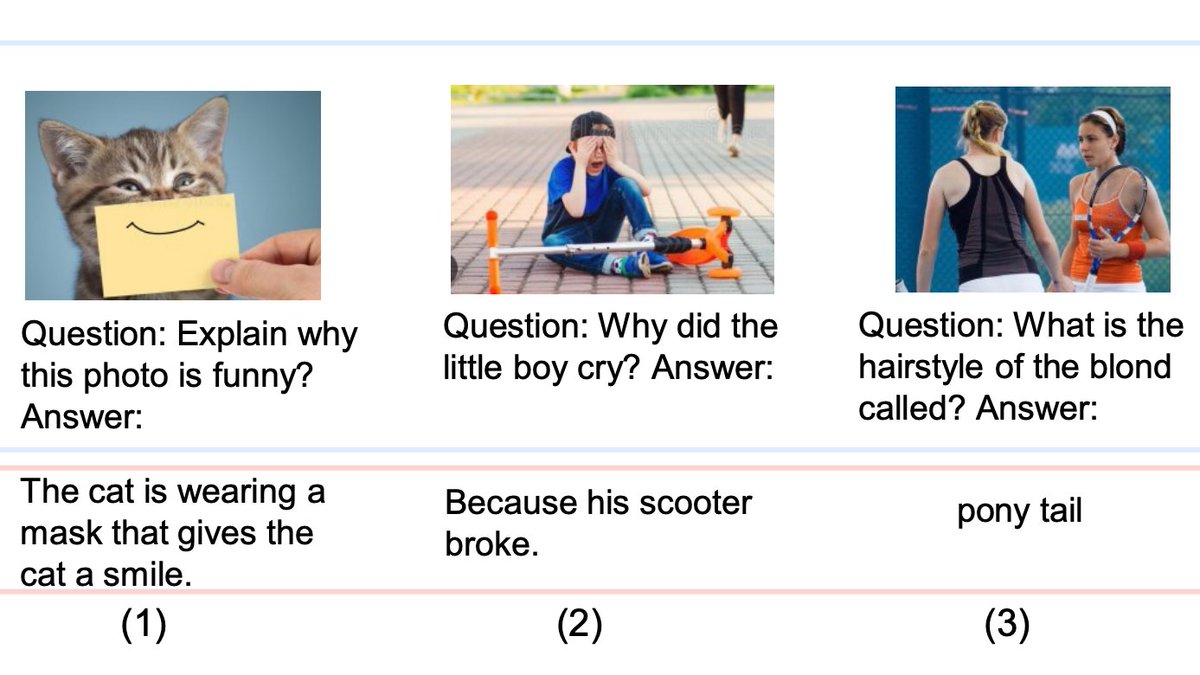

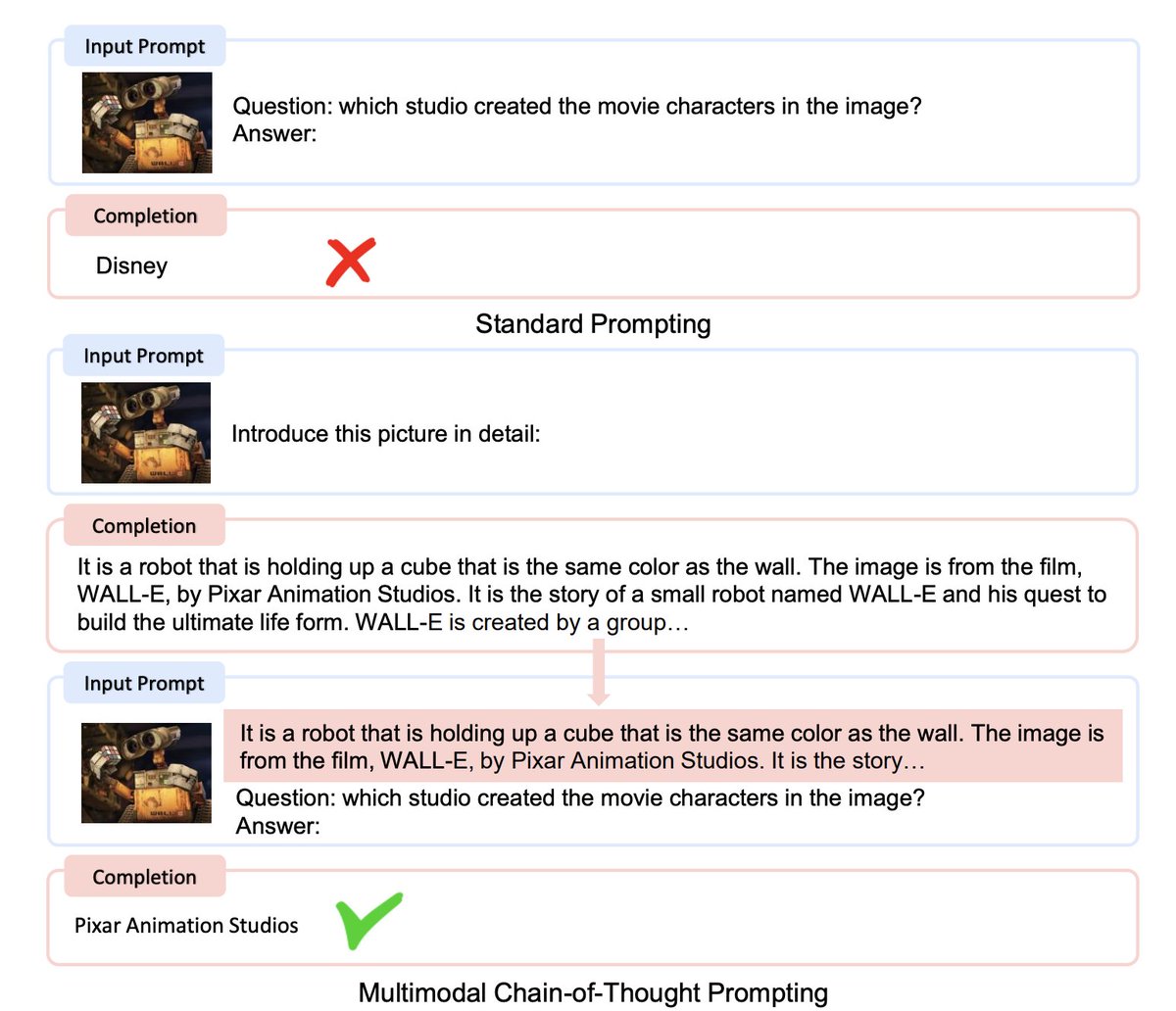

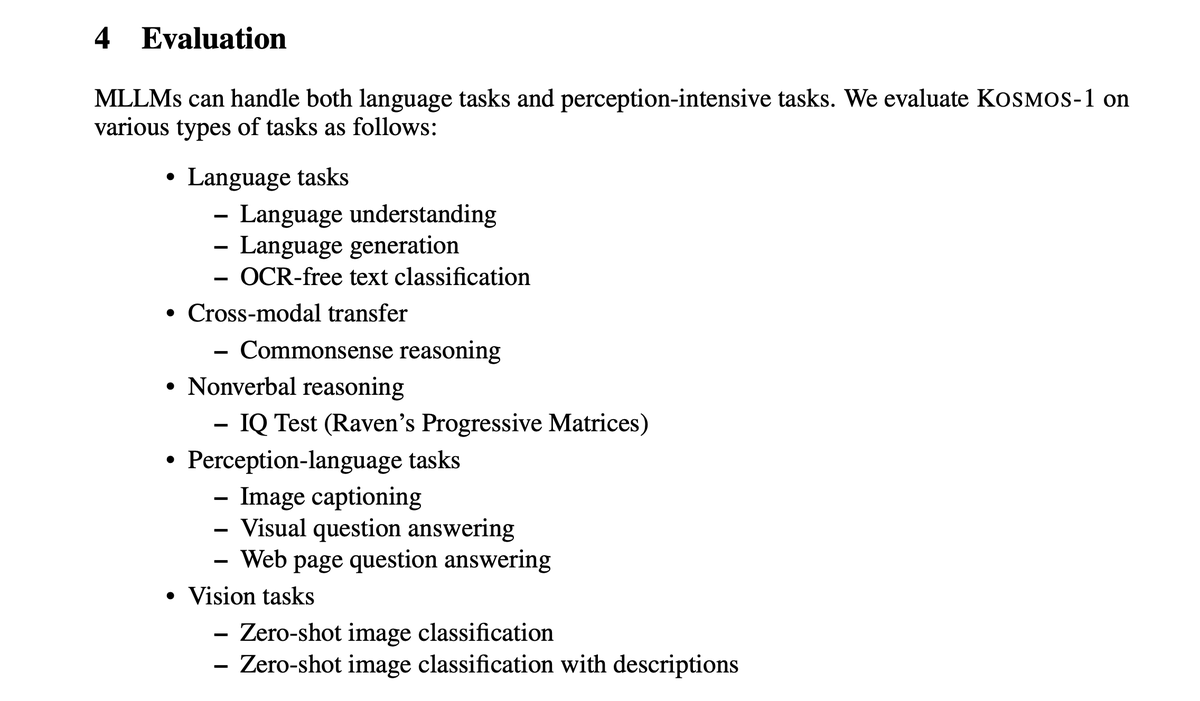

The model can understand images, text, and images that contain text, and it handles tasks such as OCR, image captioning, and visual question answering.

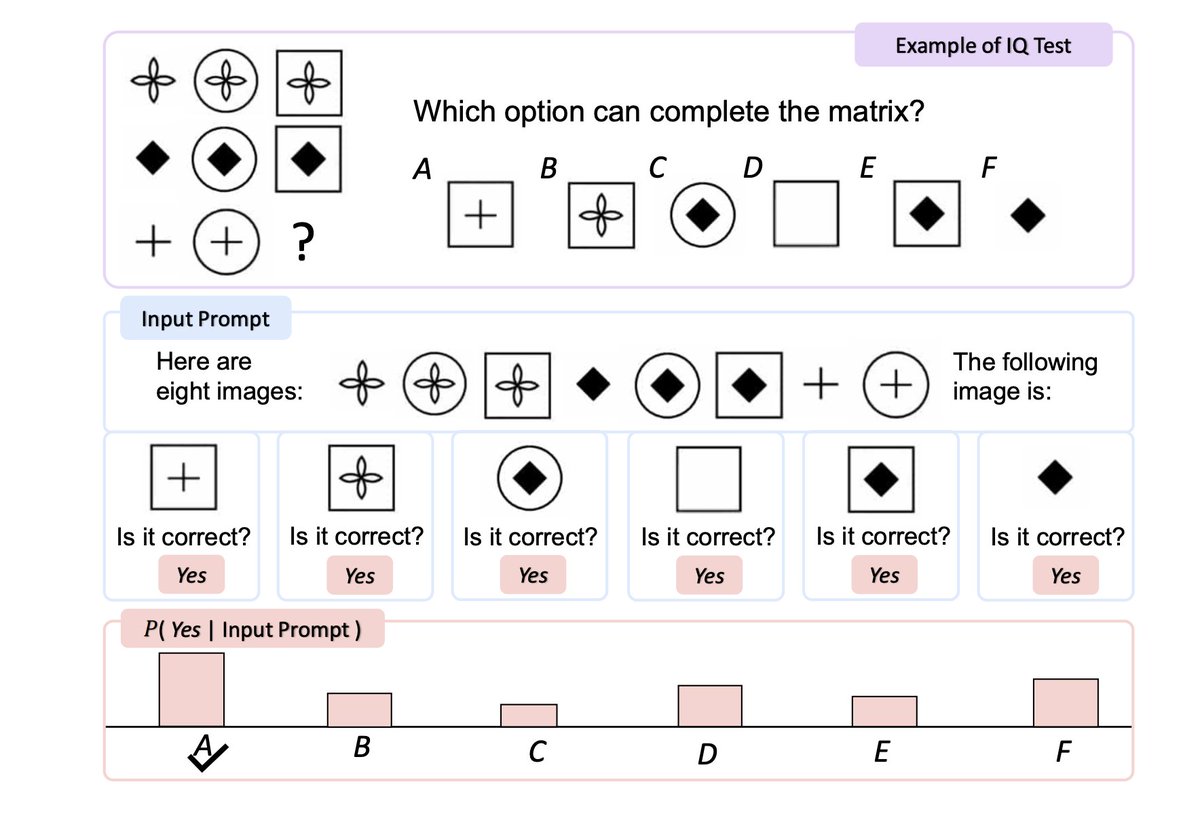

It can even solve visual IQ tests.

Paper: arxiv.org

Code: github.com

This is an example of Kosmos-1 solving a visual IQ test.

We use ML to identify the top papers, news, and repos. It's read by 50,000+ engineers and researchers.

alphasignal.ai

@ShaohanHuang, @donglixp, Wenhui Wang, Yaru Hao, @saksham_singhal, Shuming Ma, Tengchao Lv, @wolfshowme, Owais Khan Mohammed, Qiang Liu, Kriti Aggarwal, Zewen Chi, Johan Bjorck, @vishrav, Subhojit Som, Xia Song, Furu Wei
