🧵

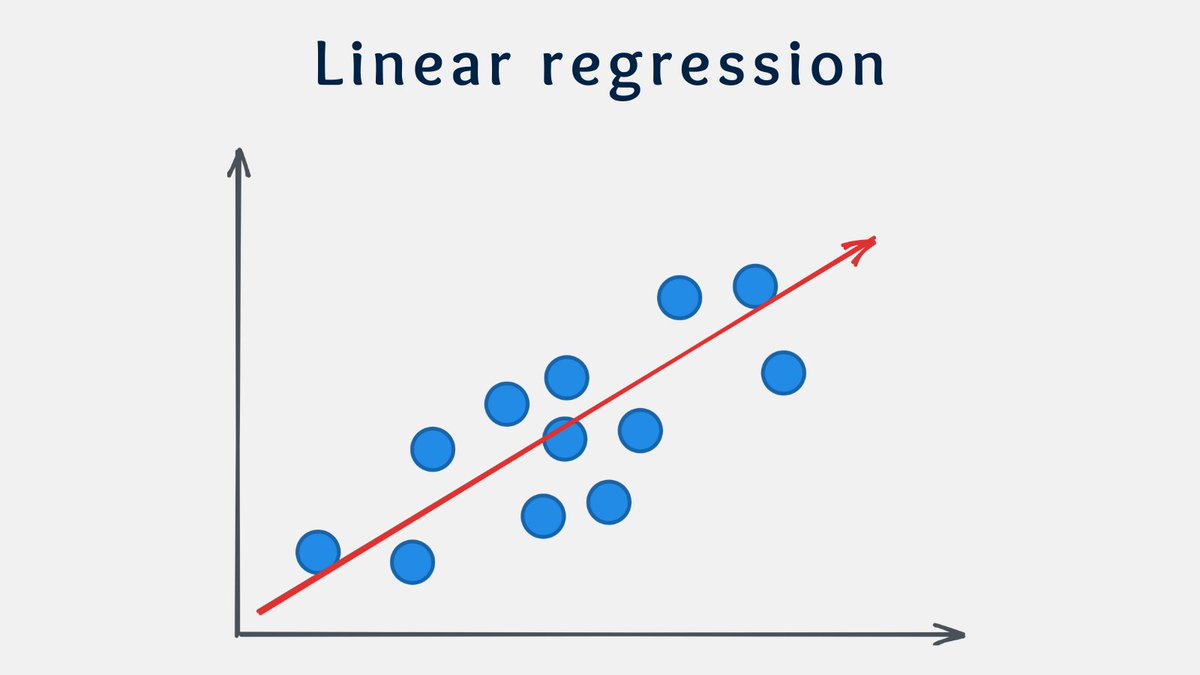

Linear regression is the most fundamental and widely used regression algorithm.

It assumes a linear relationship between the input variables and the target.

The goal is to find the best-fitting line: the one that minimizes the sum of squared errors between the predicted and actual values.
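
A minimal sketch of the "best-fitting line" idea, assuming NumPy and made-up data (the true slope of 2, intercept of 1, and noise level are illustrative choices, not from the thread):

```python
import numpy as np

# Synthetic data (assumed for illustration): y = 2x + 1 plus small noise.
rng = np.random.default_rng(0)
x = np.linspace(0, 10, 50)
y = 2.0 * x + 1.0 + rng.normal(scale=0.5, size=x.size)

# Design matrix: one column for x, one column of ones for the intercept.
X = np.column_stack([x, np.ones_like(x)])

# Least squares picks the slope and intercept that minimize
# the sum of squared differences between predictions and y.
(slope, intercept), *_ = np.linalg.lstsq(X, y, rcond=None)

residuals = y - (slope * x + intercept)
sse = float(np.sum(residuals ** 2))
print(f"slope={slope:.2f}, intercept={intercept:.2f}, SSE={sse:.2f}")
```

Any other line drawn through the same points would have a sum of squared errors at least as large as the fitted one.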

Polynomial regression allows for nonlinear relationships between variables.

It adds polynomial terms (powers of the input variables) to the linear equation, so it can capture more complex patterns in the data.
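
A small sketch of the difference, assuming NumPy's `polyfit` and made-up quadratic data (the coefficients 0.5, -1, and 2 are illustrative choices):

```python
import numpy as np

# Synthetic curved data (assumed): y = 0.5x^2 - x + 2 plus noise.
rng = np.random.default_rng(0)
x = np.linspace(-3, 3, 60)
y = 0.5 * x**2 - x + 2.0 + rng.normal(scale=0.3, size=x.size)

# Degree 1 is plain linear regression; degree 2 adds an x^2 term.
line_coefs = np.polyfit(x, y, deg=1)
quad_coefs = np.polyfit(x, y, deg=2)

def mse(coefs):
    """Mean squared error of a fitted polynomial on the data."""
    return float(np.mean((y - np.polyval(coefs, x)) ** 2))

print(f"straight line MSE: {mse(line_coefs):.3f}")
print(f"quadratic MSE:     {mse(quad_coefs):.3f}")
print("quadratic coefficients (x^2, x, 1):", np.round(quad_coefs, 2))
```

The model is still linear in its coefficients, which is why the same least-squares machinery works. Higher degrees fit the training data ever more closely, so the degree is worth choosing with a validation set.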

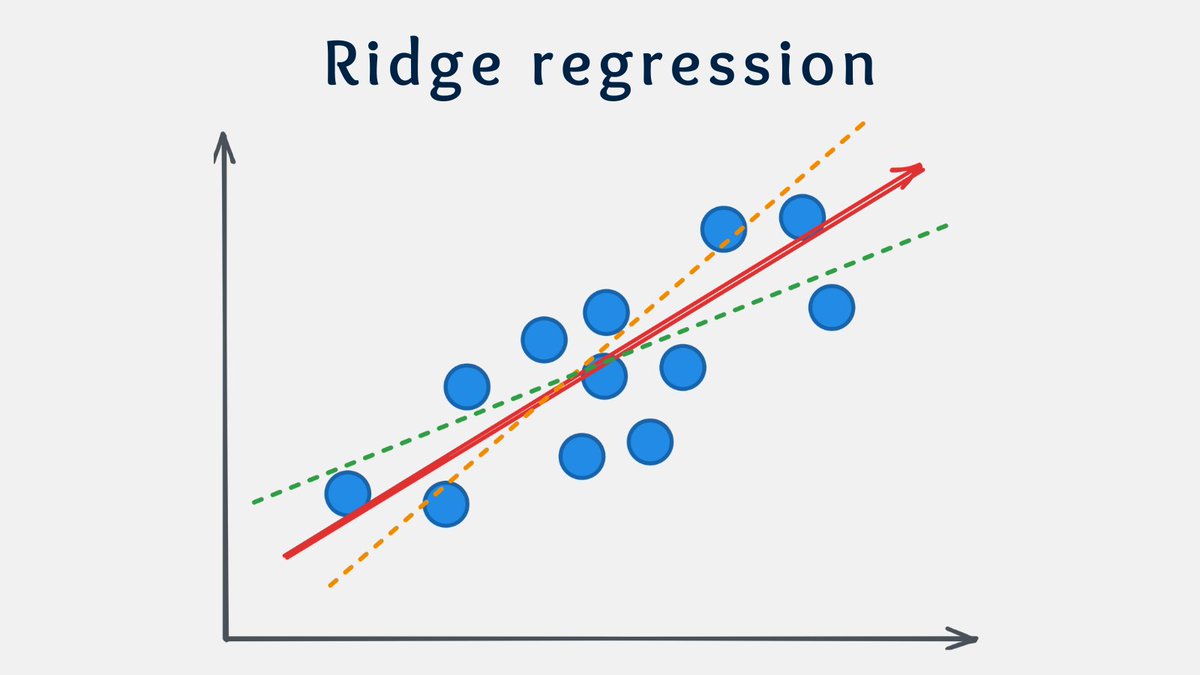

Ridge regression is useful when dealing with high-dimensional datasets.

Why?

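It adds a penalty on the squared size of the coefficients (an L2 penalty) to the linear regression cost function, which shrinks the coefficients and reduces the impact of irrelevant variables.

This makes it more robust to collinearity and overfitting than plain linear regression.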
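
A rough sketch of the penalty at work, assuming NumPy, made-up data with two nearly identical features, and a hypothetical helper `ridge_fit` that uses the closed-form solution w = (XᵀX + αI)⁻¹Xᵀy (no intercept, for brevity):

```python
import numpy as np

# Synthetic collinear data (assumed): x2 is almost a copy of x1.
rng = np.random.default_rng(0)
n = 100
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(scale=0.01, size=n)
X = np.column_stack([x1, x2])
y = 3.0 * x1 + rng.normal(scale=0.5, size=n)

def ridge_fit(X, y, alpha):
    """Minimize ||y - Xw||^2 + alpha * ||w||^2 (hypothetical helper)."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)

w_ols = ridge_fit(X, y, alpha=0.0)    # alpha = 0 is ordinary least squares
w_ridge = ridge_fit(X, y, alpha=1.0)  # alpha > 0 adds the L2 penalty

print("least squares coefficients:", np.round(w_ols, 2))
print("ridge coefficients:        ", np.round(w_ridge, 2))
```

With collinear features, least squares can assign large offsetting weights to the two copies, while the penalty pulls the ridge weights toward a smaller, more stable solution. In practice `alpha` is usually tuned by cross-validation.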

Lasso regression is similar to Ridge, but its L1 penalty can shrink coefficients to exactly zero, which removes useless variables from the equation.

It is particularly valuable when the number of predictors is large relative to the number of observations.
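
A minimal sketch of that variable selection, assuming scikit-learn's `Lasso` and made-up data in which only the first 3 of 30 features matter (the sizes, coefficients, and `alpha=0.3` are illustrative choices):

```python
import numpy as np
from sklearn.linear_model import Lasso, LinearRegression

# Synthetic data (assumed): 30 features, only the first 3 affect y.
rng = np.random.default_rng(0)
n_samples, n_features = 60, 30
X = rng.normal(size=(n_samples, n_features))
true_coef = np.zeros(n_features)
true_coef[:3] = [4.0, -3.0, 2.0]
y = X @ true_coef + rng.normal(scale=0.5, size=n_samples)

ols = LinearRegression().fit(X, y)
lasso = Lasso(alpha=0.3).fit(X, y)

# Indices of the features whose Lasso coefficient is not exactly zero.
kept = np.flatnonzero(lasso.coef_)
print("nonzero coefficients, linear regression:", np.count_nonzero(ols.coef_))
print("nonzero coefficients, Lasso:            ", kept.size)
print("features kept by Lasso:", kept)
```

A larger `alpha` zeroes out more coefficients, so it acts as a dial between keeping every variable and keeping only the strongest ones.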

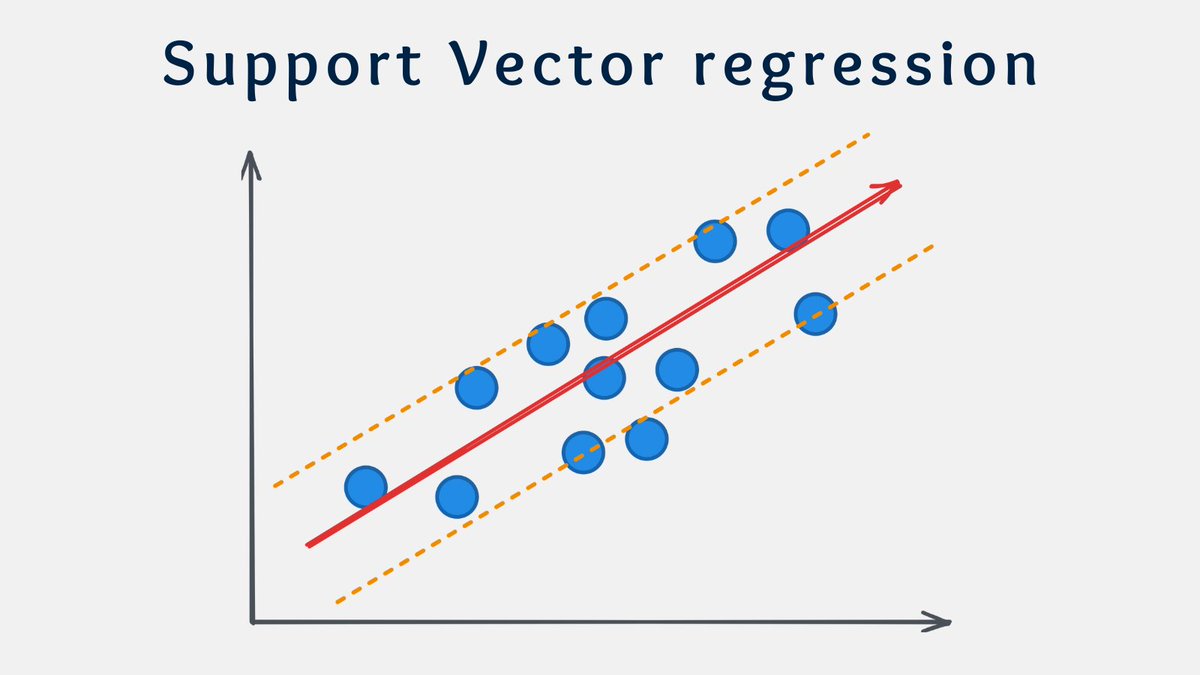

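SVR (Support Vector Regression) aims to find a function that keeps as many data points as possible within a margin (the epsilon-tube) around its predictions, while staying as flat as possible.

SVR is based on the principles of support vector machines.

With a nonlinear kernel it can model nonlinear data, and because errors inside the margin are ignored and larger errors are penalized only linearly, it is less sensitive to outliers.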
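
A short sketch, assuming scikit-learn's `SVR` with an RBF kernel and made-up sine-wave data with a few injected outliers (the values of `C` and `epsilon` are illustrative, not tuned):

```python
import numpy as np
from sklearn.svm import SVR

# Synthetic nonlinear data (assumed): a noisy sine wave.
rng = np.random.default_rng(0)
x = np.linspace(0, 2 * np.pi, 80)
y = np.sin(x) + rng.normal(scale=0.1, size=x.size)
y[[10, 30, 50, 70]] += 3.0  # inject four large outliers

X = x.reshape(-1, 1)  # scikit-learn expects a 2D feature array

# epsilon sets the half-width of the tube in which errors are ignored;
# C controls how strongly points outside the tube are penalized.
model = SVR(kernel="rbf", C=10.0, epsilon=0.1)
model.fit(X, y)

pred = model.predict(X)
mae_vs_clean = float(np.mean(np.abs(pred - np.sin(x))))
print(f"mean absolute error vs. the clean sine curve: {mae_vs_clean:.3f}")
print("support vectors used:", len(model.support_))
```

Only the points on or outside the tube become support vectors, so the fitted curve is defined by a subset of the data.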

I hope you've found this thread helpful.

Like/Retweet the first tweet below for support and follow @levikul09 for more Data Science threads.

Thanks 😉

